Configuring a Dual-Instance Keepalived Setup for Highly Available Nginx

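Keepalived was originally designed to provide high availability for LVS clusters. Beyond LVS, however, it can also make other services, such as Nginx, highly available by means of vrrp_script and track_script. This post demonstrates how to use Keepalived to make an Nginx service highly available in a dual-instance setup. For more on vrrp_script and track_script, see the previous post, 《Keepalived学习总结》 (Keepalived Study Notes).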

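Requirements ==> Use Keepalived with vrrp_script and track_script to make the Nginx service highly available. The setup must use two VRRP instances whose VIPs are 192.168.10.77 and 192.168.10.78; each VIP can be configured on a NIC alias.

Environment ==> CentOS 7.x

Goal ==> A dual-instance Keepalived setup that provides high availability for Nginx

Hosts ==> 192.168.10.6 (hostname: node1), 192.168.10.8 (hostname: node2)

Prerequisite ==> The clocks of the two nodes in the HA pair are synchronized (this can be done with a periodic job)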
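One way to meet the time-synchronization prerequisite is a cron entry on each node. The following is a minimal sketch, assuming the ntpdate utility is installed at /usr/sbin/ntpdate and using ntp.example.com as a placeholder for an NTP server that both nodes can reach; neither detail comes from the original setup.

```shell
# Placeholder NTP server (assumption): replace with one both nodes can reach.
ntp_server="ntp.example.com"

# Cron entry that syncs the clock every 5 minutes and discards the output.
cron_line="*/5 * * * * /usr/sbin/ntpdate ${ntp_server} >/dev/null 2>&1"

# Print the entry for review. To install it for the current user, it could be
# appended to the existing crontab with:
#   ( crontab -l 2>/dev/null; echo "$cron_line" ) | crontab -
echo "$cron_line"
```

The same entry would be installed on both node1 and node2 so that their clocks, and therefore their log timestamps, stay comparable.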

The steps are as follows.

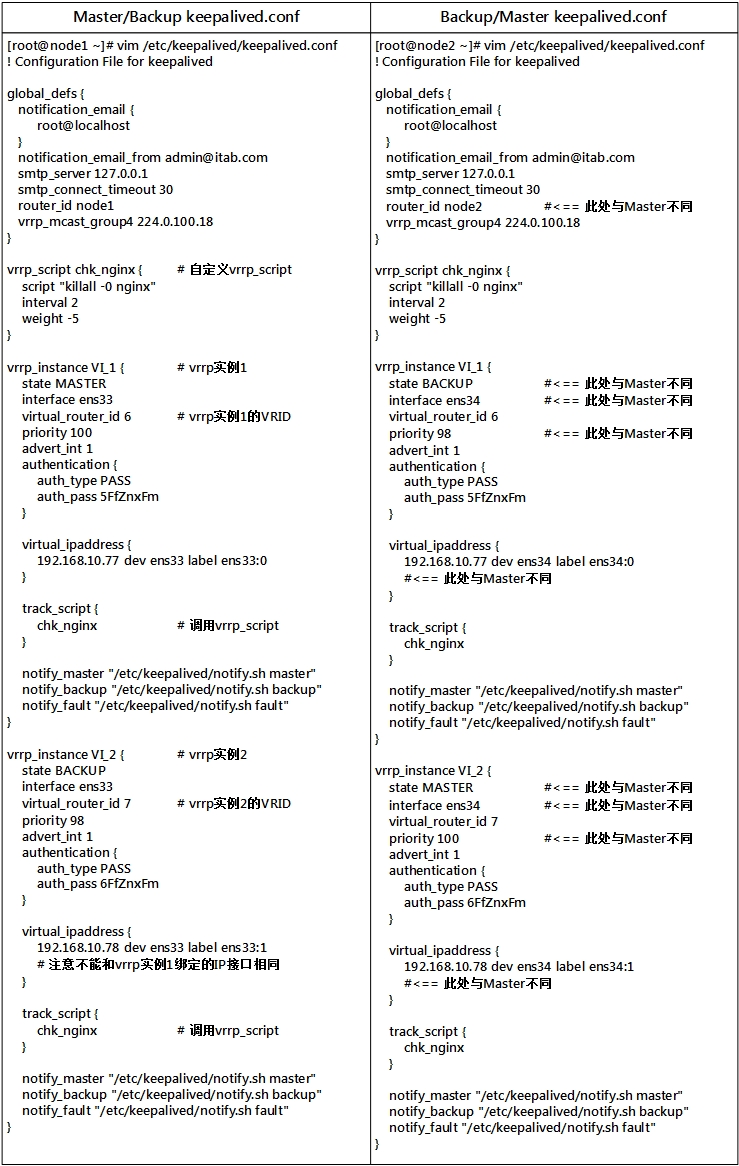

1. Edit the Keepalived configuration file
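As a rough sketch of what a dual-instance configuration for node1 might look like, the snippet below writes a sample file to a scratch location for review. It is not the exact file used in this experiment. The instance names (VI_1, VI_2), the script name (chk_nginx), the interface (ens33), the alias labels, the VIPs, and the weight of -5 match what appears elsewhere in this post; the virtual_router_id values, priorities, auth_pass, router_id, and the one-line check command are assumptions chosen for illustration.

```shell
# Write a sample node1 configuration to a scratch file for review.
# Assumptions (not from the original post): virtual_router_id values,
# priorities, auth_pass, router_id, and the one-line check command.
sample_conf="${TMPDIR:-/tmp}/keepalived.node1.sample.conf"

cat > "$sample_conf" <<'EOF'
global_defs {
    router_id node1
}

vrrp_script chk_nginx {
    script "/usr/bin/killall -0 nginx"   # exits 0 while an nginx process exists
    interval 2                           # run the check every 2 seconds
    weight -5                            # subtract 5 from priority on failure
}

vrrp_instance VI_1 {
    state MASTER                         # node1 is master for 192.168.10.77
    interface ens33
    virtual_router_id 51                 # assumed value
    priority 100                         # assumed value
    advert_int 1
    authentication {
        auth_type PASS
        auth_pass changeme               # placeholder
    }
    virtual_ipaddress {
        192.168.10.77/32 dev ens33 label ens33:0
    }
    track_script {
        chk_nginx
    }
}

vrrp_instance VI_2 {
    state BACKUP                         # node1 is backup for 192.168.10.78
    interface ens33
    virtual_router_id 52                 # assumed value
    priority 98                          # assumed value
    advert_int 1
    authentication {
        auth_type PASS
        auth_pass changeme               # placeholder
    }
    virtual_ipaddress {
        192.168.10.78/32 dev ens33 label ens33:1
    }
    track_script {
        chk_nginx
    }
}
EOF

echo "wrote $sample_conf"
```

Under these assumptions, node2 would mirror the file: interface ens34, VI_1 as BACKUP with priority 98, and VI_2 as MASTER with priority 100. The gap between the two priorities (2) is smaller than the absolute weight (5), so a failed check on the master drops it below the backup.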

2. Install Nginx with yum on both nodes and start the web service

(1) On node1 (192.168.10.6)

    [root@node1 ~]# yum -y install nginx
    [root@node1 ~]# systemctl start nginx.service
    [root@node1 ~]# ss -tnl | grep :80
    LISTEN 0 128 *:80 *:*
    LISTEN 0 128 :::80 :::*

(2) On node2 (192.168.10.8)

    [root@node2 ~]# yum -y install nginx
    [root@node2 ~]# systemctl start nginx.service
    [root@node2 ~]# ss -tnl | grep :80
    LISTEN 0 128 *:80 *:*

3. Start the Keepalived service on both nodes

(1) On node1 (192.168.10.6)

    [root@node1 ~]# systemctl start keepalived
    [root@node1 ~]# ifconfig
    ens33: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500
            inet 192.168.10.6 netmask 255.255.255.0 broadcast 192.168.10.255
            ether 00:0c:29:f7:b3:4e txqueuelen 1000 (Ethernet)
            RX packets 46064 bytes 21104876 (20.1 MiB)
            RX errors 0 dropped 0 overruns 0 frame 0
            TX packets 43548 bytes 3735943 (3.5 MiB)
            TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0
    ens33:0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500
            inet 192.168.10.77 netmask 255.255.255.255 broadcast 0.0.0.0
            ether 00:0c:29:f7:b3:4e txqueuelen 1000 (Ethernet)
    lo: flags=73<UP,LOOPBACK,RUNNING> mtu 65536
            inet 127.0.0.1 netmask 255.0.0.0
            inet6 ::1 prefixlen 128 scopeid 0x10<host>
            loop txqueuelen 0 (Local Loopback)
            RX packets 195 bytes 12522 (12.2 KiB)
            RX errors 0 dropped 0 overruns 0 frame 0
            TX packets 195 bytes 12522 (12.2 KiB)
            TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

(2) On node2 (192.168.10.8)

    [root@node2 ~]# systemctl start keepalived
    [root@node2 ~]# ifconfig
    ens34: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500
            inet 192.168.10.8 netmask 255.255.255.0 broadcast 192.168.10.255
            ether 00:0c:29:ef:52:87 txqueuelen 1000 (Ethernet)
            RX packets 60646 bytes 17433464 (16.6 MiB)
            RX errors 0 dropped 0 overruns 0 frame 0
            TX packets 29539 bytes 2636829 (2.5 MiB)
            TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0
    ens34:1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500
            inet 192.168.10.78 netmask 255.255.255.255 broadcast 0.0.0.0
            ether 00:0c:29:ef:52:87 txqueuelen 1000 (Ethernet)
    lo: flags=73<UP,LOOPBACK,RUNNING> mtu 65536
            inet 127.0.0.1 netmask 255.0.0.0
            inet6 ::1 prefixlen 128 scopeid 0x10<host>
            loop txqueuelen 0 (Local Loopback)
            RX packets 319 bytes 20447 (19.9 KiB)
            RX errors 0 dropped 0 overruns 0 frame 0
            TX packets 319 bytes 20447 (19.9 KiB)
            TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

As the output shows, node1 is the master of the first VRRP instance (VIP 192.168.10.77), so that VIP is configured on node1; node2 is the master of the second VRRP instance (VIP 192.168.10.78), so that VIP is configured on node2.

4. Inject failures to test failover

(1) Kill the nginx process on node2 and check whether the VIP of the second VRRP instance (192.168.10.78) moves to node1.

    [root@node2 ~]# killall nginx

Check the log:

    [root@node2 ~]# tail /var/log/messages
    ...(other lines omitted)...
    Aug 8 16:29:28 node2 Keepalived_vrrp[6832]: VRRP_Script(chk_nginx) failed
    Aug 8 16:29:30 node2 Keepalived_vrrp[6832]: VRRP_Instance(VI_2) Received higher prio advert
    Aug 8 16:29:30 node2 Keepalived_vrrp[6832]: VRRP_Instance(VI_2) Entering BACKUP STATE
    Aug 8 16:29:30 node2 Keepalived_vrrp[6832]: VRRP_Instance(VI_2) removing protocol VIPs.
    Aug 8 16:29:30 node2 Keepalived_healthcheckers[6831]: Netlink reflector reports IP 192.168.10.78 removed

Check the IP addresses on node2:

    [root@node2 ~]# ifconfig
    ens34: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500
            inet 192.168.10.8 netmask 255.255.255.0 broadcast 192.168.10.255
            ether 00:0c:29:ef:52:87 txqueuelen 1000 (Ethernet)
            RX packets 61483 bytes 17487867 (16.6 MiB)
            RX errors 0 dropped 0 overruns 0 frame 0
            TX packets 29994 bytes 2672261 (2.5 MiB)
            TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0
    lo: flags=73<UP,LOOPBACK,RUNNING> mtu 65536
            inet 127.0.0.1 netmask 255.0.0.0
            inet6 ::1 prefixlen 128 scopeid 0x10<host>
            loop txqueuelen 0 (Local Loopback)
            RX packets 323 bytes 20647 (20.1 KiB)
            RX errors 0 dropped 0 overruns 0 frame 0
            TX packets 323 bytes 20647 (20.1 KiB)
            TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

The VIP of the second VRRP instance (192.168.10.78) has been removed from node2.

Check the IP addresses on node1:

    [root@node1 ~]# ifconfig
    ens33: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500
            inet 192.168.10.6 netmask 255.255.255.0 broadcast 192.168.10.255
            ether 00:0c:29:f7:b3:4e txqueuelen 1000 (Ethernet)
            RX packets 46700 bytes 21146944 (20.1 MiB)
            RX errors 0 dropped 0 overruns 0 frame 0
            TX packets 44417 bytes 3794925 (3.6 MiB)
            TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0
    ens33:0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500
            inet 192.168.10.77 netmask 255.255.255.255 broadcast 0.0.0.0
            ether 00:0c:29:f7:b3:4e txqueuelen 1000 (Ethernet)
    ens33:1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500
            inet 192.168.10.78 netmask 255.255.255.255 broadcast 0.0.0.0
            ether 00:0c:29:f7:b3:4e txqueuelen 1000 (Ethernet)
    lo: flags=73<UP,LOOPBACK,RUNNING> mtu 65536
            inet 127.0.0.1 netmask 255.0.0.0
            inet6 ::1 prefixlen 128 scopeid 0x10<host>
            loop txqueuelen 0 (Local Loopback)
            RX packets 199 bytes 12722 (12.4 KiB)
            RX errors 0 dropped 0 overruns 0 frame 0
            TX packets 199 bytes 12722 (12.4 KiB)
            TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

node1 is now the master of both the first VRRP instance (VIP 192.168.10.77) and the second VRRP instance (VIP 192.168.10.78).
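This failover follows from how a negative weight on a tracked script adjusts VRRP priority: while the script fails, the instance's effective priority is its configured priority plus the weight, and the node advertising the higher priority becomes master. The sketch below works through that arithmetic. The base priorities (100 and 98) are assumed values chosen for illustration, and the helper name effective_priority is hypothetical; only the weight of -5 appears in this post, in the sample vrrp_script block near the end.

```shell
# effective_priority BASE WEIGHT SCRIPT_OK
# Prints BASE while the tracked script succeeds (SCRIPT_OK=1), and
# BASE + WEIGHT (WEIGHT is negative here) while it fails (SCRIPT_OK=0).
effective_priority() {
    base=$1
    weight=$2
    script_ok=$3
    if [ "$script_ok" -eq 1 ]; then
        echo "$base"
    else
        echo $((base + weight))
    fi
}

# Assumed VI_2 priorities: node2 is the configured master (100),
# node1 is the backup (98).
node2=$(effective_priority 100 -5 0)   # nginx has been killed on node2
node1=$(effective_priority 98 -5 1)    # nginx is healthy on node1

# Equal priorities are not modelled in this sketch.
if [ "$node1" -gt "$node2" ]; then
    echo "VI_2 master: node1 ($node1 > $node2)"
else
    echo "VI_2 master: node2 ($node2 >= $node1)"
fi
```

With these assumed numbers node2 drops to 95, which is below node1's 98, matching the "Received higher prio advert" line in node2's log.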

(2) Kill the nginx process on node1 and restart nginx on node2, then check whether the VIPs of both VRRP instances (192.168.10.77 and 192.168.10.78) move to node2.

First restart nginx on node2. The VIP of the second VRRP instance (192.168.10.78) should move back to node2:

    [root@node2 ~]# systemctl restart nginx.service
    [root@node2 ~]# ss -tnl | grep :80
    LISTEN 0 128 *:80 *:*
    [root@node2 ~]# ifconfig
    ens34: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500
            inet 192.168.10.8 netmask 255.255.255.0 broadcast 192.168.10.255
            ether 00:0c:29:ef:52:87 txqueuelen 1000 (Ethernet)
            RX packets 62438 bytes 17551143 (16.7 MiB)
            RX errors 0 dropped 0 overruns 0 frame 0
            TX packets 30145 bytes 2688809 (2.5 MiB)
            TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0
    ens34:1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500
            inet 192.168.10.78 netmask 255.255.255.255 broadcast 0.0.0.0
            ether 00:0c:29:ef:52:87 txqueuelen 1000 (Ethernet)
    lo: flags=73<UP,LOOPBACK,RUNNING> mtu 65536
            inet 127.0.0.1 netmask 255.0.0.0
            inet6 ::1 prefixlen 128 scopeid 0x10<host>
            loop txqueuelen 0 (Local Loopback)
            RX packets 331 bytes 21047 (20.5 KiB)
            RX errors 0 dropped 0 overruns 0 frame 0
            TX packets 331 bytes 21047 (20.5 KiB)
            TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

As expected, 192.168.10.78 is back on node2.

Now kill the nginx process on node1 and check whether the VIP of the first VRRP instance (192.168.10.77) also moves to node2:

    [root@node1 ~]# killall nginx # kill the nginx process
    [root@node1 ~]# tail /var/log/messages # check the log
    Aug 8 16:36:41 node1 Keepalived_vrrp[7026]: VRRP_Instance(VI_2) Sending gratuitous ARPs on ens33 for 192.168.10.78
    Aug 8 16:37:10 node1 Keepalived_vrrp[7026]: VRRP_Instance(VI_2) Received higher prio advert
    Aug 8 16:37:10 node1 Keepalived_vrrp[7026]: VRRP_Instance(VI_2) Entering BACKUP STATE
    Aug 8 16:37:10 node1 Keepalived_vrrp[7026]: VRRP_Instance(VI_2) removing protocol VIPs.
    Aug 8 16:37:10 node1 Keepalived_healthcheckers[7025]: Netlink reflector reports IP 192.168.10.78 removed
    Aug 8 16:38:44 node1 Keepalived_vrrp[7026]: VRRP_Script(chk_nginx) failed
    Aug 8 16:38:45 node1 Keepalived_vrrp[7026]: VRRP_Instance(VI_1) Received higher prio advert
    Aug 8 16:38:45 node1 Keepalived_vrrp[7026]: VRRP_Instance(VI_1) Entering BACKUP STATE
    Aug 8 16:38:45 node1 Keepalived_vrrp[7026]: VRRP_Instance(VI_1) removing protocol VIPs.
    Aug 8 16:38:45 node1 Keepalived_healthcheckers[7025]: Netlink reflector reports IP 192.168.10.77 removed
    [root@node1 ~]# ifconfig # check the IP addresses
    ens33: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500
            inet 192.168.10.6 netmask 255.255.255.0 broadcast 192.168.10.255
            ether 00:0c:29:f7:b3:4e txqueuelen 1000 (Ethernet)
            RX packets 47288 bytes 21191119 (20.2 MiB)
            RX errors 0 dropped 0 overruns 0 frame 0
            TX packets 45193 bytes 3854623 (3.6 MiB)
            TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0
    lo: flags=73<UP,LOOPBACK,RUNNING> mtu 65536
            inet 127.0.0.1 netmask 255.0.0.0
            inet6 ::1 prefixlen 128 scopeid 0x10<host>
            loop txqueuelen 0 (Local Loopback)
            RX packets 215 bytes 13522 (13.2 KiB)
            RX errors 0 dropped 0 overruns 0 frame 0
            TX packets 215 bytes 13522 (13.2 KiB)
            TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

Neither VIP is present on node1 any longer.

Check the IP addresses on node2 again:

    [root@node2 ~]# ifconfig
    ens34: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500
            inet 192.168.10.8 netmask 255.255.255.0 broadcast 192.168.10.255
            ether 00:0c:29:ef:52:87 txqueuelen 1000 (Ethernet)
            RX packets 62612 bytes 17562546 (16.7 MiB)
            RX errors 0 dropped 0 overruns 0 frame 0
            TX packets 30521 bytes 2712799 (2.5 MiB)
            TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0
    ens34:0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500
            inet 192.168.10.77 netmask 255.255.255.255 broadcast 0.0.0.0
            ether 00:0c:29:ef:52:87 txqueuelen 1000 (Ethernet)
    ens34:1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500
            inet 192.168.10.78 netmask 255.255.255.255 broadcast 0.0.0.0
            ether 00:0c:29:ef:52:87 txqueuelen 1000 (Ethernet)
    lo: flags=73<UP,LOOPBACK,RUNNING> mtu 65536
            inet 127.0.0.1 netmask 255.0.0.0
            inet6 ::1 prefixlen 128 scopeid 0x10<host>
            loop txqueuelen 0 (Local Loopback)
            RX packets 335 bytes 21247 (20.7 KiB)
            RX errors 0 dropped 0 overruns 0 frame 0
            TX packets 335 bytes 21247 (20.7 KiB)
            TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

Both VIPs have moved to node2.
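Rather than reading full ifconfig dumps after every step, the VIP check can be scripted. The following is a minimal sketch, assuming the iproute2 `ip` command is available on the node; the helper name holds_vip is hypothetical. It reports which of the two VIPs the local node currently holds.

```shell
# holds_vip ADDR_OUTPUT VIP
# Succeeds if VIP appears as an IPv4 address in the given
# `ip -o -4 addr show` output. The trailing "/" keeps 192.168.10.7
# from matching 192.168.10.77.
holds_vip() {
    printf '%s\n' "$1" | grep -qF "inet $2/"
}

# Capture the local IPv4 addresses (left empty if `ip` is unavailable).
addrs=$(ip -o -4 addr show 2>/dev/null || true)

for vip in 192.168.10.77 192.168.10.78; do
    if holds_vip "$addrs" "$vip"; then
        echo "$vip: present on $(uname -n)"
    else
        echo "$vip: not present on $(uname -n)"
    fi
done
```

Running this on both nodes after each failure test gives a two-line summary of where each VIP currently lives.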

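The vrrp_script used in this example is, of course, very simple. Here is a more complete vrrp_script monitoring script, for reference only.

    [root@node1 ~]# vim /etc/keepalived/vrrp_script.sh

    #!/bin/bash
    #
    # Health check for Keepalived: if nginx is not running, try to restart
    # it up to three times. Exit 0 if nginx is (or becomes) healthy, and
    # exit 1 only if every restart attempt fails.

    server=nginx

    restart_server() {
        if ! systemctl restart "$server" &> /dev/null; then
            sleep 1
            # Return non-zero so the caller can tell the restart failed.
            return 1
        else
            exit 0
        fi
    }

    # killall -0 sends no signal; it only tests whether the process exists.
    if ! killall -0 "$server" &> /dev/null; then
        restart_server
        restart_server
        restart_server
        [ $? -ne 0 ] && exit 1 || exit 0
    fi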

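The path to the script must then be specified in the keepalived.conf configuration file.

    [root@node1 ~]# vim /etc/keepalived/keepalived.conf

    ...(other lines omitted)...

    vrrp_script chk_nginx {
        script "/etc/keepalived/vrrp_script.sh"
        interval 2
        weight -5
    }

    ...(other lines omitted)...

That completes the experiment!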

Reposted from: https://blog.51cto.com/xuweitao/1954608
