FastDFS点滴记录

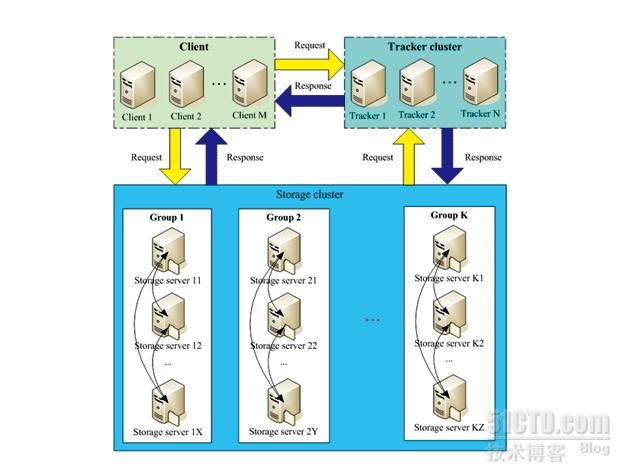

fastdfs是一个开源的,高性能的的分布式文件系统,他主要的功能包括:文件存储,同步和访问,设计基于高可用和负载均衡,fastfd非常适用于基于文件服务的站点,例如图片分享和视频分享网站

fastfds有两个角色:跟踪服务和存储服务,跟踪服务控制,调度文件以负载均衡的方式访问;存储服务包括:文件存储,文件同步,提供文件访问接口,同时以key value的方式管理文件的元数据

跟踪和存储服务可以由1台或者多台服务器组成,同时可以动态的添加,删除跟踪和存储服务而不会对在线的服务产生影响,在集群中,tracker服务是对等的

存储系统由一个或多个卷组成,卷与卷之间的文件是相互独立的,所有卷的文件容量累加就是整个存储系统中的文件容量。一个卷可以由一台或多台存储服务器组成,一个卷下的存储服务器中的文件都是相同的,卷中的多台存储服务器起到了冗余备份和负载均衡的作用。在卷中增加服务器时,同步已有的文件由系统自动完成,同步完成后,系统自动将新增服务器切换到线上提供服务。当存储空间不足或即将耗尽时,可以动态添加卷。只需要增加一台或多台服务器,并将它们配置为一个新的卷,这样就扩大了存储系统的容量。

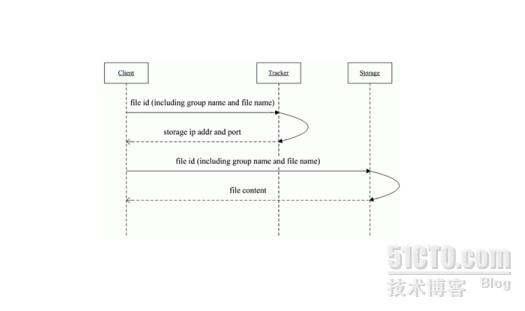

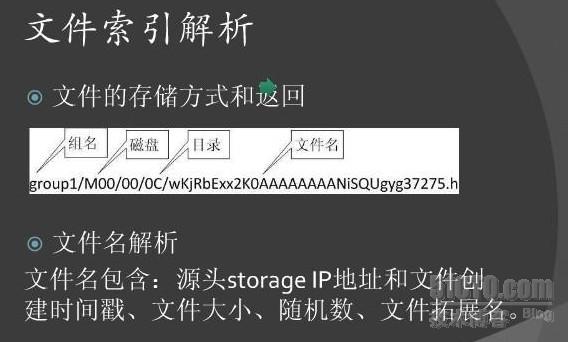

下面几张图可以清楚的说明fastfds的架构和文件上传和下载流程等:

下面将介绍下fastdfs的部署过程

tracker服务器:192.168.0.1/24

storage服务器:192.168.0.2/24

1,编译安装

wget https://github.com/downloads/libevent/libevent/libevent-1.4.14b-stable.tar.gz

wget https://fastdfs.googlecode.com/files/FastDFS_v4.06.tar.gz

1)安装fastdfs所需的libevent库

tar -zxf libevent-1.4.14b-stable.tar.gz

cd libevent-1.4.14b-stable

./configure --prefix=/usr/local/libevent

make && make install

2)安装fastdfs

tar -zxf FastDFS_v4.06.tar.gz

cd FastDFS

./make.sh C_INCLUDE_PATH=/usr/local/libevent/include LIBRARY_PATH=/usr/local/libevent/lib

./make.sh install

echo '/usr/local/libevent/include/' >> /etc/ld.so.conf

echo '/usr/local/libevent/lib/' >> /etc/ld.so.conf

ldconfig

这里的Tracker和Storage安装方法一样,仅存在配置差异,所以storage的安装此处省略,只说明配置文件

3)配置Tracker

3-1)Tracker配置

安装完毕后,会自动在/etc/fdfs/生成配置文件,这里只配置跟Tracker相关的配置文件,tracker.conf,具体如下:

# is this config file disabled

# false for enabled

# true for disabled

disabled=false

# bind an address of this host

# empty for bind all addresses of this host

bind_addr=

# the tracker server port

port=22122

# connect timeout in seconds

# default value is 30s

connect_timeout=30

# network timeout in seconds

# default value is 30s

network_timeout=60

# the base path to store data and log files

base_path=/home/storage1/fastdfs

# max concurrent connections this server supported

max_connections=256

# work thread count, should <= max_connections

# default value is 4

# since V2.00

work_threads=4

# the method of selecting group to upload files

# 0: round robin

# 1: specify group

# 2: load balance, select the max free space group to upload file

store_lookup=2

# which group to upload file

# when store_lookup set to 1, must set store_group to the group name

store_group=group2

# which storage server to upload file

# 0: round robin (default)

# 1: the first server order by ip address

# 2: the first server order by priority (the minimal)

store_server=0

# which path(means disk or mount point) of the storage server to upload file

# 0: round robin

# 2: load balance, select the max free space path to upload file

store_path=0

# which storage server to download file

# 0: round robin (default)

# 1: the source storage server which the current file uploaded to

download_server=0

# reserved storage space for system or other applications.

# if the free(available) space of any stoarge server in

# a group <= reserved_storage_space,

# no file can be uploaded to this group.

# bytes unit can be one of follows:

### G or g for gigabyte(GB)

### M or m for megabyte(MB)

### K or k for kilobyte(KB)

### no unit for byte(B)

### XX.XX% as ratio such as reserved_storage_space = 10%

reserved_storage_space = 10%

#standard log level as syslog, case insensitive, value list:

### emerg for emergency

### alert

### crit for critical

### error

### warn for warning

### notice

### info

### debug

log_level=info

#unix group name to run this program,

#not set (empty) means run by the group of current user

run_by_group=

#unix username to run this program,

#not set (empty) means run by current user

run_by_user=

# allow_hosts can ocur more than once, host can be hostname or ip address,

# "*" means match all ip addresses, can use range like this: 10.0.1.[1-15,20] or

# host[01-08,20-25].domain.com, for example:

# allow_hosts=10.0.1.[1-15,20]

# allow_hosts=host[01-08,20-25].domain.com

allow_hosts=*

# sync log buff to disk every interval seconds

# default value is 10 seconds

sync_log_buff_interval = 10

# check storage server alive interval seconds

check_active_interval = 120

# thread stack size, should >= 64KB

# default value is 64KB

thread_stack_size = 64KB

# auto adjust when the ip address of the storage server changed

# default value is true

storage_ip_changed_auto_adjust = true

# storage sync file max delay seconds

# default value is 86400 seconds (one day)

# since V2.00

storage_sync_file_max_delay = 86400

# the max time of storage sync a file

# default value is 300 seconds

# since V2.00

storage_sync_file_max_time = 300

# if use a trunk file to store several small files

# default value is false

# since V3.00

use_trunk_file = false

# the min slot size, should <= 4KB

# default value is 256 bytes

# since V3.00

slot_min_size = 256

# the max slot size, should > slot_min_size

# store the upload file to trunk file when it's size <= this value

# default value is 16MB

# since V3.00

slot_max_size = 16MB

# the trunk file size, should >= 4MB

# default value is 64MB

# since V3.00

trunk_file_size = 64MB

# if create trunk file advancely

# default value is false

# since V3.06

trunk_create_file_advance = false

# the time base to create trunk file

# the time format: HH:MM

# default value is 02:00

# since V3.06

trunk_create_file_time_base = 02:00

# the interval of create trunk file, unit: second

# default value is 38400 (one day)

# since V3.06

trunk_create_file_interval = 86400

# the threshold to create trunk file

# when the free trunk file size less than the threshold, will create

# the trunk files

# default value is 0

# since V3.06

trunk_create_file_space_threshold = 20G

# if check trunk space occupying when loading trunk free spaces

# the occupied spaces will be ignored

# default value is false

# since V3.09

# NOTICE: set this parameter to true will slow the loading of trunk spaces

# when startup. you should set this parameter to true when neccessary.

trunk_init_check_occupying = false

# if ignore storage_trunk.dat, reload from trunk binlog

# default value is false

# since V3.10

# set to true once for version upgrade when your version less than V3.10

trunk_init_reload_from_binlog = false

# if use storage ID instead of IP address

# default value is false

# since V4.00

use_storage_id = false

# specify storage ids filename, can use relative or absolute path

# since V4.00

storage_ids_filename = storage_ids.conf

# id type of the storage server in the filename, values are:

## ip: the ip address of the storage server

## id: the server id of the storage server

# this paramter is valid only when use_storage_id set to true

# default value is ip

# since V4.03

id_type_in_filename = ip

# if store slave file use symbol link

# default value is false

# since V4.01

store_slave_file_use_link = false

# if rotate the error log every day

# default value is false

# since V4.02

rotate_error_log = false

# rotate error log time base, time format: Hour:Minute

# Hour from 0 to 23, Minute from 0 to 59

# default value is 00:00

# since V4.02

error_log_rotate_time=00:00

# rotate error log when the log file exceeds this size

# 0 means never rotates log file by log file size

# default value is 0

# since V4.02

rotate_error_log_size = 0

# if use connection pool

# default value is false

# since V4.05

use_connection_pool = false

# connections whose the idle time exceeds this time will be closed

# unit: second

# default value is 3600

# since V4.05

connection_pool_max_idle_time = 3600

# HTTP port on this tracker server

http.server_port=8080

# check storage HTTP server alive interval seconds

# <= 0 for never check

# default value is 30

http.check_alive_interval=30

# check storage HTTP server alive type, values are:

# tcp : connect to the storge server with HTTP port only,

# do not request and get response

# http: storage check alive url must return http status 200

# default value is tcp

http.check_alive_type=tcp

# check storage HTTP server alive uri/url

# NOTE: storage embed HTTP server support uri: /status.html

http.check_alive_uri=/status.html

配置完后,mkdir -p /home/storage1/fastdfs

启动fastdfs:/usr/local/bin/fdfst_tracker /etc/fdfs/tracker.conf

netstat -ntpl |grep fdfs

tcp 0 0 192.168.0.1:23000 0.0.0.0:* LISTEN

14451/fdfs_storaged

OK,启动完毕,下面来配置storage(这里storage配置文件里,group_name默认是group1,可根据自己需求修改活着不修改)

vi /etc/fdfs/storage.conf

# is this config file disabled

# false for enabled

# true for disabled

disabled=false

# the name of the group this storage server belongs to

group_name=g1

# bind an address of this host

# empty for bind all addresses of this host

bind_addr=

# if bind an address of this host when connect to other servers

# (this storage server as a client)

# true for binding the address configed by above parameter: "bind_addr"

# false for binding any address of this host

client_bind=true

# the storage server port

port=23000

# connect timeout in seconds

# default value is 30s

connect_timeout=30

# network timeout in seconds

# default value is 30s

network_timeout=60

# heart beat interval in seconds

heart_beat_interval=30

# disk usage report interval in seconds

stat_report_interval=60

# the base path to store data and log files

base_path=/home/storage1/fastdfs

# max concurrent connections the server supported

# default value is 256

# more max_connections means more memory will be used

max_connections=256

# the buff size to recv / send data

# this parameter must more than 8KB

# default value is 64KB

# since V2.00

buff_size = 256KB

# work thread count, should <= max_connections

# work thread deal network io

# default value is 4

# since V2.00

work_threads=4

# if disk read / write separated

## false for mixed read and write

## true for separated read and write

# default value is true

# since V2.00

disk_rw_separated = true

# disk reader thread count per store base path

# for mixed read / write, this parameter can be 0

# default value is 1

# since V2.00

disk_reader_threads = 1

# disk writer thread count per store base path

# for mixed read / write, this parameter can be 0

# default value is 1

# since V2.00

disk_writer_threads = 1

# when no entry to sync, try read binlog again after X milliseconds

# must > 0, default value is 200ms

sync_wait_msec=50

# after sync a file, usleep milliseconds

# 0 for sync successively (never call usleep)

sync_interval=0

# storage sync start time of a day, time format: Hour:Minute

# Hour from 0 to 23, Minute from 0 to 59

sync_start_time=00:00

# storage sync end time of a day, time format: Hour:Minute

# Hour from 0 to 23, Minute from 0 to 59

sync_end_time=23:59

# write to the mark file after sync N files

# default value is 500

write_mark_file_freq=500

# path(disk or mount point) count, default value is 1

store_path_count=1

# store_path#, based 0, if store_path0 not exists, it's value is base_path

# the paths must be exist

store_path0=/home/storage1/fastdfs

#store_path1=/home/yuqing/fastdfs2

# subdir_count * subdir_count directories will be auto created under each

# store_path (disk), value can be 1 to 256, default value is 256

subdir_count_per_path=256

# tracker_server can ocur more than once, and tracker_server format is

# "host:port", host can be hostname or ip address

tracker_server=192.168.0.1:22122

#standard log level as syslog, case insensitive, value list:

### emerg for emergency

### alert

### crit for critical

### error

### warn for warning

### notice

### info

### debug

log_level=info

#unix group name to run this program,

#not set (empty) means run by the group of current user

run_by_group=

#unix username to run this program,

#not set (empty) means run by current user

run_by_user=

# allow_hosts can ocur more than once, host can be hostname or ip address,

# "*" means match all ip addresses, can use range like this: 10.0.1.[1-15,20] or

# host[01-08,20-25].domain.com, for example:

# allow_hosts=10.0.1.[1-15,20]

# allow_hosts=host[01-08,20-25].domain.com

allow_hosts=*

# the mode of the files distributed to the data path

# 0: round robin(default)

# 1: random, distributted by hash code

file_distribute_path_mode=0

# valid when file_distribute_to_path is set to 0 (round robin),

# when the written file count reaches this number, then rotate to next path

# default value is 100

file_distribute_rotate_count=100

# call fsync to disk when write big file

# 0: never call fsync

# other: call fsync when written bytes >= this bytes

# default value is 0 (never call fsync)

fsync_after_written_bytes=0

# sync log buff to disk every interval seconds

# must > 0, default value is 10 seconds

sync_log_buff_interval=10

# sync binlog buff / cache to disk every interval seconds

# default value is 60 seconds

sync_binlog_buff_interval=10

# sync storage stat info to disk every interval seconds

# default value is 300 seconds

sync_stat_file_interval=300

# thread stack size, should >= 512KB

# default value is 512KB

thread_stack_size=512KB

# the priority as a source server for uploading file.

# the lower this value, the higher its uploading priority.

# default value is 10

upload_priority=10

# the NIC alias prefix, such as eth in Linux, you can see it by ifconfig -a

# multi aliases split by comma. empty value means auto set by OS type

# default values is empty

if_alias_prefix=

# if check file duplicate, when set to true, use FastDHT to store file indexes

# 1 or yes: need check

# 0 or no: do not check

# default value is 0

check_file_duplicate=0

# file signature method for check file duplicate

## hash: four 32 bits hash code

## md5: MD5 signature

# default value is hash

# since V4.01

file_signature_method=hash

# namespace for storing file indexes (key-value pairs)

# this item must be set when check_file_duplicate is true / on

key_namespace=FastDFS

# set keep_alive to 1 to enable persistent connection with FastDHT servers

# default value is 0 (short connection)

keep_alive=0

# you can use "#include filename" (not include double quotes) directive to

# load FastDHT server list, when the filename is a relative path such as

# pure filename, the base path is the base path of current/this config file.

# must set FastDHT server list when check_file_duplicate is true / on

# please see INSTALL of FastDHT for detail

##include /home/yuqing/fastdht/conf/fdht_servers.conf

# if log to access log

# default value is false

# since V4.00

use_access_log = false

# if rotate the access log every day

# default value is false

# since V4.00

rotate_access_log = false

# rotate access log time base, time format: Hour:Minute

# Hour from 0 to 23, Minute from 0 to 59

# default value is 00:00

# since V4.00

access_log_rotate_time=00:00

# if rotate the error log every day

# default value is false

# since V4.02

rotate_error_log = false

# rotate error log time base, time format: Hour:Minute

# Hour from 0 to 23, Minute from 0 to 59

# default value is 00:00

# since V4.02

error_log_rotate_time=00:00

# rotate access log when the log file exceeds this size

# 0 means never rotates log file by log file size

# default value is 0

# since V4.02

rotate_access_log_size = 0

# rotate error log when the log file exceeds this size

# 0 means never rotates log file by log file size

# default value is 0

# since V4.02

rotate_error_log_size = 0

# if skip the invalid record when sync file

# default value is false

# since V4.02

file_sync_skip_invalid_record=false

# if use connection pool

# default value is false

# since V4.05

use_connection_pool = false

# connections whose the idle time exceeds this time will be closed

# unit: second

# default value is 3600

# since V4.05

connection_pool_max_idle_time = 3600

# use the ip address of this storage server if domain_name is empty,

# else this domain name will ocur in the url redirected by the tracker server

http.domain_name=

# the port of the web server on this storage server

http.server_port=80

mkdir -p /home/storage1/fastdfs

启动storage:/usr/local/bin/fdfs_storage /etc/fdfs/storage.conf

好了,这里就全部配置完成了,由于fastdfs最新版本取消了内置的http服务,我们需要从直接从storage服务get图片,因此还需要给storage安装一个http服务,也可以把相关p_w_picpath服务直接部署在stroage上,nginx,apache都可以,此处以nginx为例:

转载于:https://blog.51cto.com/nanow/1204697

FastDFS点滴记录相关推荐

- 关于TVM的点滴记录

关于TVM的点滴记录

- 软件开发心得点滴记录

软件开发心得点滴记录 一见 创建日期:2013/6/27 1. 前言 自从2002年大学毕业后一直沉浸于软件开发之路,平时喜欢思考和归纳,时常会产生一点心得和想法,回想起来是一笔宝贵的财富,只可惜陆陆 ...

- 深度学习(四十)caffe使用点滴记录

caffe使用点滴记录-持续更新 一.caffe 创建python 层 因为caffe底层是用c++编写的,所以我们有的时候想要添加某一个最新文献出来的新算法,正常的方法是直接编写c++网络层,然而这 ...

- python学习点滴记录-Day10-线程

多线程 协程 io模型 并发编程需要掌握的点: 1 生产者消费者模型2 进程池线程池3 回调函数4 GIL全局解释器锁 线程 理论部分 (摘自egon老师博客) 一.定义: 在传统操作系统中,每个进程 ...

- 点滴记录,与技术无关

emmm....不知不觉已经快一年没更新了, 看了看上次的记录,那个后台已经被扼杀在胚胎期了. 距离上次更新过去了10个月,这10个月之间买了房子,也装修好了,即将搬家,年后三月初也换了工作,新工作两 ...

- 生活点滴记录-- 两点一线

呵呵,各位朋友好,在此记录下最近一段时间的生活内容. 早上 8:30 上班 --- 上午设计android框架代码 -- 下午编码 -- 6:00 准时(准时下班真爽呀,可以早早回家吃饭) -- 7 ...

- DW-CHEN的Java点滴记录JavaWeb之HTTP协议/Servlet/Cookie/Session/JSP/EL/JSTL/Filter/Listener

JavaEE规范 JavaEE(Java Enterprise Edition):Java企业版,早期叫J2EE(J2EE的版本从1.0到1.4结束):现在Java版本从JavaEE 5开始 Java ...

- 招商银行银企直联开发点滴记录

1. 概述 最近工作中用到了招商银行的银企直联系统,作为资金支出渠道.招行系统提供两种方式与企业财务系统对接:一种是前置机式:一种是嵌入式.而"嵌入式直联方式仅作向下兼容支持,新增客户请使用 ...

- struts2点滴记录

1.s:textfield 赋值方法 <s:textfield name="Tname" value="%{#session.Teacher.name}" ...

最新文章

- React和Jquery比较

- ImageView可直接调用的,根据URL设置图片的工具类

- OpenGL blending 混合的实例

- Android 沙箱开源,Android Sandbox(沙箱)开源工具介绍

- 如何截获打印机文件_打印、复印还不会,如何在办公室里混?全程详细教学

- python zipfile_python zipfile模块

- 接待员如何向客人upsell_如何提升自我做好客户服务与管理?

- 20200507:力扣151周赛下

- C# 根据地址调用 Google Map 服务得到经纬度

- 记于开学两个星期...十九岁快乐!

- matlab 振动,Matlab振动程序-代码作业

- 台式计算机前面插耳机没声音,Win10台式机机箱前置耳机插孔没声音如何修复

- k3 cloud api java_调用K3Cloud webapi

- 舌尖上的阳朔,除米粉之外的桂菜诱惑

- 海洋测绘各种数据考点

- android倒数计时器,Android倒计时(分钟)

- HTML爱心动画小玩意儿

- 【BUG】DLL load failed while importing pyopenpose: 找不到指定的模块

- Java数据结构与算法———(10)单链表应用实例,找到单链表中倒数第K个节点

- 戴好这六顶帽子的项目经理,无论项目团队还是个人成长都受益终生

热门文章

- AndroidStudio_android使用自己封装的消息队列处理问题_封装LinkedQueue---Android原生开发工作笔记242

- C++_二维数组_定义方式_数组名称的作用_案例考试成绩统计---C++语言工作笔记021

- axios创建实例对象发送ajax请求_解决一个网页请求多个服务器场景---axios工作笔记009

- 微服务升级_SpringCloud Alibaba工作笔记0017---Nacos之服务消费者注册和负载

- K8S_Google工作笔记0011---通过二进制方式_为APIServer生成自签证书

- Netty工作笔记0063---WebSocket长连接开发2

- java零碎要点010---Java实现office文档与pdf文档的在线预览功能

- 使用plt *.log

- Ipimage 转mat

- XGBoost原理及在Python中使用XGBoost