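Configuring the DNS Service in a Kubernetes Cluster

Building on the previous article, this one describes how to configure a DNS service in a Kubernetes cluster. Pods in a k8s cluster are short-lived, and a pod's IP address changes after it restarts, which is unacceptable for applications that reach each other by IP address. To solve this, Kubernetes introduces a DNS service for service discovery. The DNS setup described here uses four components, with responsibilities as follows: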

etcd: stores the DNS records.

kube2sky: registers the services defined on the Kubernetes master in etcd.

skyDNS: provides DNS name resolution.

healthz: provides health checks for the skydns service.
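To make the naming scheme concrete: the health check in the RC file later in this article queries `kubernetes.default.svc.cluster.local`, which follows the pattern `<service>.<namespace>.svc.<domain>`. The following is a minimal sketch that builds such names. The function name `svc_fqdn` is hypothetical, and the defaults (`default` namespace, `cluster.local` domain) are assumptions that match the values used in this article.

```shell
#!/bin/sh
# Build the DNS name under which a Kubernetes service is published.
# Usage: svc_fqdn SERVICE [NAMESPACE] [DOMAIN]
# NAMESPACE defaults to "default" and DOMAIN to "cluster.local"
# (assumed defaults, chosen to match this article's configuration).
svc_fqdn() {
    printf '%s.%s.svc.%s\n' "$1" "${2:-default}" "${3:-cluster.local}"
}

svc_fqdn kubernetes          # prints kubernetes.default.svc.cluster.local
svc_fqdn frontend default    # prints frontend.default.svc.cluster.local
```

If a different `-domain` value is passed to kube2sky and skydns, the third argument would change accordingly.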

1. Download the required images and push them to the local registry for central management

# docker pull docker.io/elcolio/etcd

# docker pull docker.io/port/kubernetes-kube2sky

# docker pull docker.io/skynetservices/skydns

# docker pull docker.io/wu1boy/healthz

# docker tag docker.io/elcolio/etcd registry.fjhb.cn/etcd

# docker tag docker.io/port/kubernetes-kube2sky registry.fjhb.cn/kubernetes-kube2sky

# docker tag docker.io/skynetservices/skydns registry.fjhb.cn/skydns

# docker tag docker.io/wu1boy/healthz registry.fjhb.cn/healthz

# docker push registry.fjhb.cn/etcd

# docker push registry.fjhb.cn/kubernetes-kube2sky

# docker push registry.fjhb.cn/skydns

# docker push registry.fjhb.cn/healthz

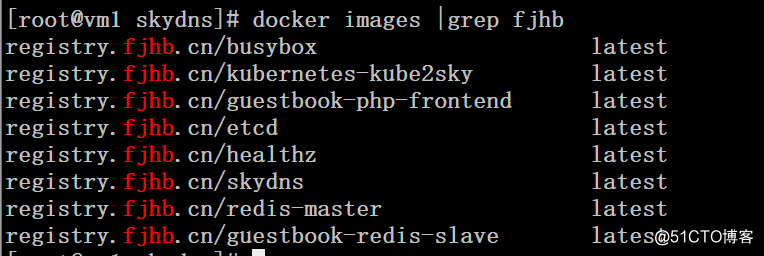

# docker images |grep fjhb
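The pull, tag, and push commands above repeat the same pattern for each image, so they can be expressed as a loop. This is a sketch only: the function name `mirror_images` and the `DRY_RUN` switch are hypothetical, the registry and image names are the ones used in this article, and the loop processes one image at a time rather than running all pulls first. With `DRY_RUN=1` (the default here) it only prints the commands.

```shell
#!/bin/sh
# Mirror the four DNS images into the private registry.
# REGISTRY and IMAGES are taken from the commands in this article.
REGISTRY="registry.fjhb.cn"
IMAGES="elcolio/etcd port/kubernetes-kube2sky skynetservices/skydns wu1boy/healthz"

mirror_images() {
    for src in $IMAGES; do
        name="${src##*/}"    # drop the Docker Hub namespace, keep the image name
        for cmd in "docker pull docker.io/$src" \
                   "docker tag docker.io/$src $REGISTRY/$name" \
                   "docker push $REGISTRY/$name"; do
            if [ "${DRY_RUN:-1}" = "1" ]; then
                echo "$cmd"          # dry run: show the command only
            else
                $cmd || return 1     # stop at the first failing command
            fi
        done
    done
}

mirror_images
```

Running it with `DRY_RUN=0` would execute the docker commands for real, so it is worth reviewing the printed commands first.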

2. Create the pod from an RC file

A single pod contains all four components, and each component runs in its own Docker container.

# cat skydns-rc.yaml

apiVersion: v1
kind: ReplicationController
metadata:
  name: kube-dns
  namespace: default
  labels:
    k8s-app: kube-dns
    version: v12
    kubernetes.io/cluster-service: "true"
spec:
  replicas: 1
  selector:
    k8s-app: kube-dns
    version: v12
  template:
    metadata:
      labels:
        k8s-app: kube-dns
        version: v12
        kubernetes.io/cluster-service: "true"
    spec:
      containers:
      - name: etcd
        image: registry.fjhb.cn/etcd
        resources:
          limits:
            cpu: 100m
            memory: 50Mi
          requests:
            cpu: 100m
            memory: 50Mi
        command:
        - /bin/etcd
        - --data-dir
        - /tmp/data
        - --listen-client-urls
        - http://127.0.0.1:2379,http://127.0.0.1:4001
        - --advertise-client-urls
        - http://127.0.0.1:2379,http://127.0.0.1:4001
        - --initial-cluster-token
        - skydns-etcd
        volumeMounts:
        - name: etcd-storage
          mountPath: /tmp/data
      - name: kube2sky
        image: registry.fjhb.cn/kubernetes-kube2sky
        resources:
          limits:
            cpu: 100m
            memory: 50Mi
          requests:
            cpu: 100m
            memory: 50Mi
        args:
        - -kube_master_url=http://192.168.115.5:8080
        - -domain=cluster.local
      - name: skydns
        image: registry.fjhb.cn/skydns
        resources:
          limits:
            cpu: 100m
            memory: 50Mi
          requests:
            cpu: 100m
            memory: 50Mi
        args:
        - -machines=http://127.0.0.1:4001
        - -addr=0.0.0.0:53
        - -ns-rotate=false
        - -domain=cluster.local
        ports:
        - containerPort: 53
          name: dns
          protocol: UDP
        - containerPort: 53
          name: dns-tcp
          protocol: TCP
      - name: healthz
        image: registry.fjhb.cn/healthz
        resources:
          limits:
            cpu: 10m
            memory: 20Mi
          requests:
            cpu: 10m
            memory: 20Mi
        args:
        - -cmd=nslookup kubernetes.default.svc.cluster.local 127.0.0.1 >/dev/null
        - -port=8080
        ports:
        - containerPort: 8080
          protocol: TCP
      volumes:
      - name: etcd-storage
        emptyDir: {}
      dnsPolicy: Default

3. Create the service from an svc file

# cat skydns-svc.yaml

apiVersion: v1
kind: Service
metadata:
  name: kube-dns
  namespace: default
  labels:
    k8s-app: kube-dns
    kubernetes.io/cluster-service: "true"
    kubernetes.io/name: "KubeDNS"
spec:
  selector:
    k8s-app: kube-dns
  clusterIP: 10.254.16.254
  ports:
  - name: dns
    port: 53
    protocol: UDP
  - name: dns-tcp
    port: 53
    protocol: TCP

# kubectl create -f skydns-rc.yaml

# kubectl create -f skydns-svc.yaml

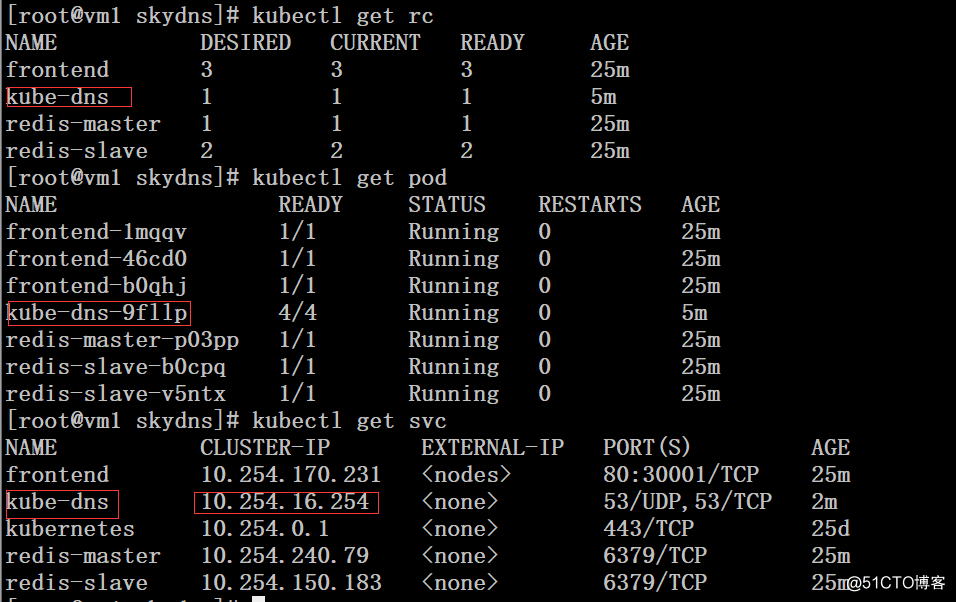

# kubectl get rc

# kubectl get pod

# kubectl get svc

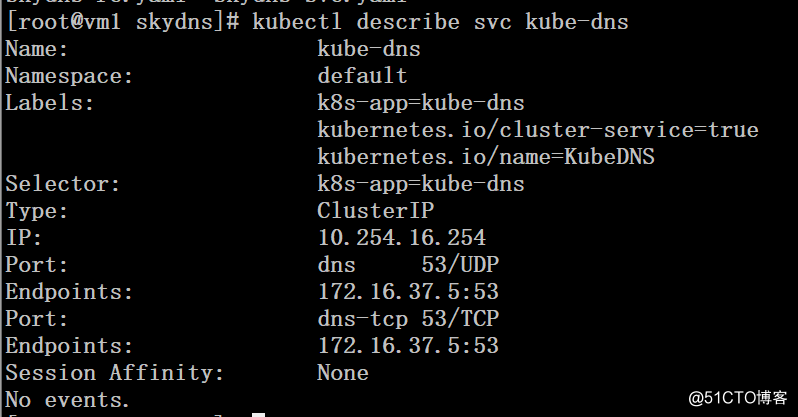

# kubectl describe svc kube-dns

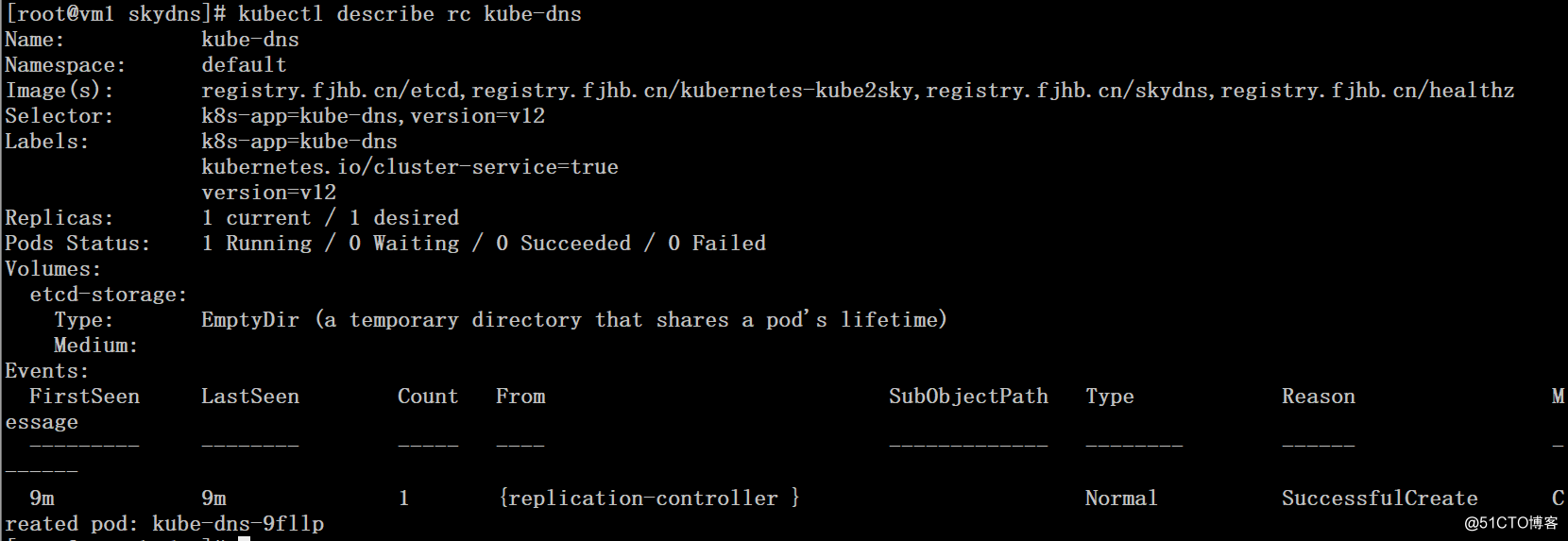

# kubectl describe rc kube-dns

# kubectl describe pod kube-dns-9fllp

Name:           kube-dns-9fllp
Namespace:      default
Node:           192.168.115.6/192.168.115.6
Start Time:     Tue, 23 Jan 2018 10:55:19 -0500
Labels:         k8s-app=kube-dns
                kubernetes.io/cluster-service=true
                version=v12
Status:         Running
IP:             172.16.37.5
Controllers:    ReplicationController/kube-dns
Containers:
  etcd:
    Container ID:   docker://62ad76bfaca1797c5f43b0e9eebc04074169fce4cc15ef3ffc4cd19ffa9c8c19
    Image:          registry.fjhb.cn/etcd
    Image ID:       docker-pullable://docker.io/elcolio/etcd@sha256:3b4dcd35a7eefea9ce2970c81dcdf0d0801a778d117735ee1d883222de8bbd9f
    Port:
    Command:
      /bin/etcd
      --data-dir
      /tmp/data
      --listen-client-urls
      http://127.0.0.1:2379,http://127.0.0.1:4001
      --advertise-client-urls
      http://127.0.0.1:2379,http://127.0.0.1:4001
      --initial-cluster-token
      skydns-etcd
    Limits:
      cpu:      100m
      memory:   50Mi
    Requests:
      cpu:      100m
      memory:   50Mi
    State:          Running
      Started:      Tue, 23 Jan 2018 10:55:23 -0500
    Ready:          True
    Restart Count:  0
    Volume Mounts:
      /tmp/data from etcd-storage (rw)
      /var/run/secrets/kubernetes.io/serviceaccount from default-token-6pddn (ro)
    Environment Variables:  <none>
  kube2sky:
    Container ID:   docker://6b0bc6e8dce83e3eee5c7e654fbaca693730623fb7936a1fd9d73de1a1dd8152
    Image:          registry.fjhb.cn/kubernetes-kube2sky
    Image ID:       docker-pullable://docker.io/port/kubernetes-kube2sky@sha256:0230d3fbb0aeb4ddcf903811441cf2911769dbe317a55187f58ca84c95107ff5
    Port:
    Args:
      -kube_master_url=http://192.168.115.5:8080
      -domain=cluster.local
    Limits:
      cpu:      100m
      memory:   50Mi
    Requests:
      cpu:      100m
      memory:   50Mi
    State:          Running
      Started:      Tue, 23 Jan 2018 10:55:25 -0500
    Ready:          True
    Restart Count:  0
    Volume Mounts:
      /var/run/secrets/kubernetes.io/serviceaccount from default-token-6pddn (ro)
    Environment Variables:  <none>
  skydns:
    Container ID:   docker://ebc2aaaa54e2f922e370e454ec537665d813c69d37a21e3afd908e6dad056627
    Image:          registry.fjhb.cn/skydns
    Image ID:       docker-pullable://docker.io/skynetservices/skydns@sha256:6f8a9cff0b946574bb59804016d3aacebc637581bace452db6a7515fa2df79ee
    Ports:          53/UDP, 53/TCP
    Args:
      -machines=http://127.0.0.1:4001
      -addr=0.0.0.0:53
      -ns-rotate=false
      -domain=cluster.local
    Limits:
      cpu:      100m
      memory:   50Mi
    Requests:
      cpu:      100m
      memory:   50Mi
    State:          Running
      Started:      Tue, 23 Jan 2018 10:55:27 -0500
    Ready:          True
    Restart Count:  0
    Volume Mounts:
      /var/run/secrets/kubernetes.io/serviceaccount from default-token-6pddn (ro)
    Environment Variables:  <none>
  healthz:
    Container ID:   docker://f1de1189fa6b51281d414d7a739b86494b04c8271dc6bb5f20c51fac15ec9601
    Image:          registry.fjhb.cn/healthz
    Image ID:       docker-pullable://docker.io/wu1boy/healthz@sha256:d6690c0a8cc4f810a5e691b6a9b8b035192cb967cb10e91c74824bb4c8eea796
    Port:           8080/TCP
    Args:
      -cmd=nslookup kubernetes.default.svc.cluster.local 127.0.0.1 >/dev/null
      -port=8080
    Limits:
      cpu:      10m
      memory:   20Mi
    Requests:
      cpu:      10m
      memory:   20Mi
    State:          Running
      Started:      Tue, 23 Jan 2018 10:55:29 -0500
    Ready:          True
    Restart Count:  0
    Volume Mounts:
      /var/run/secrets/kubernetes.io/serviceaccount from default-token-6pddn (ro)
    Environment Variables:  <none>
Conditions:
  Type          Status
  Initialized   True
  Ready         True
  PodScheduled  True
Volumes:
  etcd-storage:
    Type:       EmptyDir (a temporary directory that shares a pod's lifetime)
    Medium:
  default-token-6pddn:
    Type:       Secret (a volume populated by a Secret)
    SecretName: default-token-6pddn
QoS Class:      Guaranteed
Tolerations:    <none>
Events:
  FirstSeen  LastSeen  Count  From                     SubObjectPath              Type    Reason     Message
  ---------  --------  -----  ----                     -------------              ----    ------     -------
  7m         7m        1      {default-scheduler }                                Normal  Scheduled  Successfully assigned kube-dns-9fllp to 192.168.115.6
  7m         7m        1      {kubelet 192.168.115.6}  spec.containers{etcd}      Normal  Pulling    pulling image "registry.fjhb.cn/etcd"
  7m         7m        1      {kubelet 192.168.115.6}  spec.containers{etcd}      Normal  Pulled     Successfully pulled image "registry.fjhb.cn/etcd"
  7m         7m        1      {kubelet 192.168.115.6}  spec.containers{etcd}      Normal  Created    Created container with docker id 62ad76bfaca1; Security:[seccomp=unconfined]
  7m         7m        1      {kubelet 192.168.115.6}  spec.containers{kube2sky}  Normal  Pulled     Successfully pulled image "registry.fjhb.cn/kubernetes-kube2sky"
  7m         7m        1      {kubelet 192.168.115.6}  spec.containers{etcd}      Normal  Started    Started container with docker id 62ad76bfaca1
  7m         7m        1      {kubelet 192.168.115.6}  spec.containers{kube2sky}  Normal  Pulling    pulling image "registry.fjhb.cn/kubernetes-kube2sky"
  7m         7m        1      {kubelet 192.168.115.6}  spec.containers{kube2sky}  Normal  Created    Created container with docker id 6b0bc6e8dce8; Security:[seccomp=unconfined]
  7m         7m        1      {kubelet 192.168.115.6}  spec.containers{skydns}    Normal  Pulled     Successfully pulled image "registry.fjhb.cn/skydns"
  7m         7m        1      {kubelet 192.168.115.6}  spec.containers{skydns}    Normal  Pulling    pulling image "registry.fjhb.cn/skydns"
  7m         7m        1      {kubelet 192.168.115.6}  spec.containers{kube2sky}  Normal  Started    Started container with docker id 6b0bc6e8dce8
  7m         7m        1      {kubelet 192.168.115.6}  spec.containers{skydns}    Normal  Created    Created container with docker id ebc2aaaa54e2; Security:[seccomp=unconfined]
  7m         7m        1      {kubelet 192.168.115.6}  spec.containers{skydns}    Normal  Started    Started container with docker id ebc2aaaa54e2
  7m         7m        1      {kubelet 192.168.115.6}  spec.containers{healthz}   Normal  Pulling    pulling image "registry.fjhb.cn/healthz"
  7m         7m        1      {kubelet 192.168.115.6}  spec.containers{healthz}   Normal  Pulled     Successfully pulled image "registry.fjhb.cn/healthz"
  7m         7m        1      {kubelet 192.168.115.6}  spec.containers{healthz}   Normal  Created    Created container with docker id f1de1189fa6b; Security:[seccomp=unconfined]
  7m         7m        1      {kubelet 192.168.115.6}  spec.containers{healthz}   Normal  Started    Started container with docker id f1de1189fa6b

4. Modify the kubelet configuration file and restart the service

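Notes:

The --cluster-dns parameter must match the clusterIP parameter in the svc file above.

The --cluster-domain parameter must match the -domain parameter in the rc file above.

The kubelet configuration must be changed on every kubelet node in the cluster.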

# grep 'KUBELET_ADDRESS' /etc/kubernetes/kubelet

KUBELET_ADDRESS="--address=192.168.115.5 --cluster-dns=10.254.16.254 --cluster-domain=cluster.local"
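Because a mismatch between `--cluster-dns` and the service's `clusterIP` is easy to introduce, the comparison can be scripted. The sketch below is an illustration only: the function names are hypothetical, and the parsing assumes the file layouts shown in this article (a `clusterIP:` line in the manifest and a `--cluster-dns=` flag somewhere in the kubelet file).

```shell
#!/bin/sh
# Print the clusterIP value found in a Service manifest.
svc_cluster_ip() {
    sed -n 's/^[[:space:]]*clusterIP:[[:space:]]*//p' "$1" | head -n 1
}

# Print the value of the --cluster-dns flag found in a kubelet config file.
kubelet_cluster_dns() {
    sed -n 's/.*--cluster-dns=\([^ "]*\).*/\1/p' "$1" | head -n 1
}

# Succeed only when both values are present and identical.
# Usage: check_cluster_dns SVC_MANIFEST KUBELET_CONFIG
check_cluster_dns() {
    svc_ip="$(svc_cluster_ip "$1")"
    dns_ip="$(kubelet_cluster_dns "$2")"
    [ -n "$svc_ip" ] && [ "$svc_ip" = "$dns_ip" ]
}

# Example, using the paths from this article:
# check_cluster_dns skydns-svc.yaml /etc/kubernetes/kubelet && echo "cluster-dns matches"
```

The same check would need to be run on each node, since every kubelet has its own configuration file.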

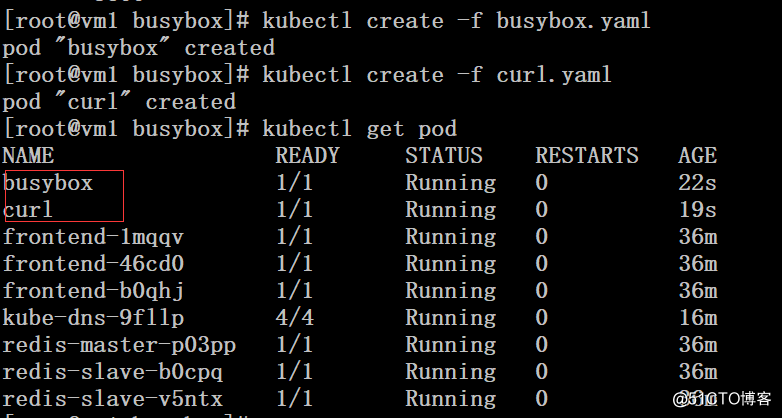

# systemctl restart kubelet

5. Run a busybox pod and a curl pod for testing

# cat busybox.yaml

apiVersion: v1
kind: Pod
metadata:
  name: busybox
spec:
  containers:
  - name: busybox
    image: docker.io/busybox
    command:
    - sleep
    - "3600"

# cat curl.yaml

apiVersion: v1
kind: Pod
metadata:
  name: curl
spec:
  containers:
  - name: curl
    image: docker.io/webwurst/curl-utils
    command:
    - sleep
    - "3600"

# kubectl create -f busybox.yaml

# kubectl create -f curl.yaml

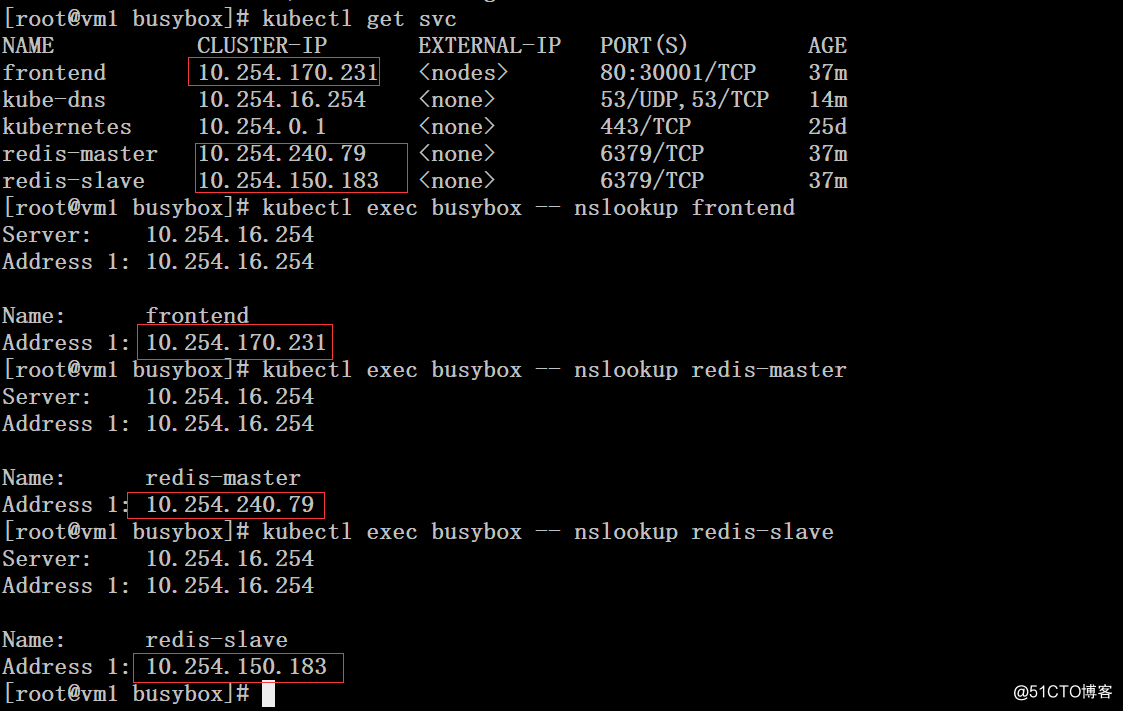

Resolve the Kubernetes services from inside the busybox container. Each service name resolves automatically to its cluster IP address, not to a Docker address in the 172.16 network.

# kubectl get svc

# kubectl exec busybox -- nslookup frontend

# kubectl exec busybox -- nslookup redis-master

# kubectl exec busybox -- nslookup redis-slave

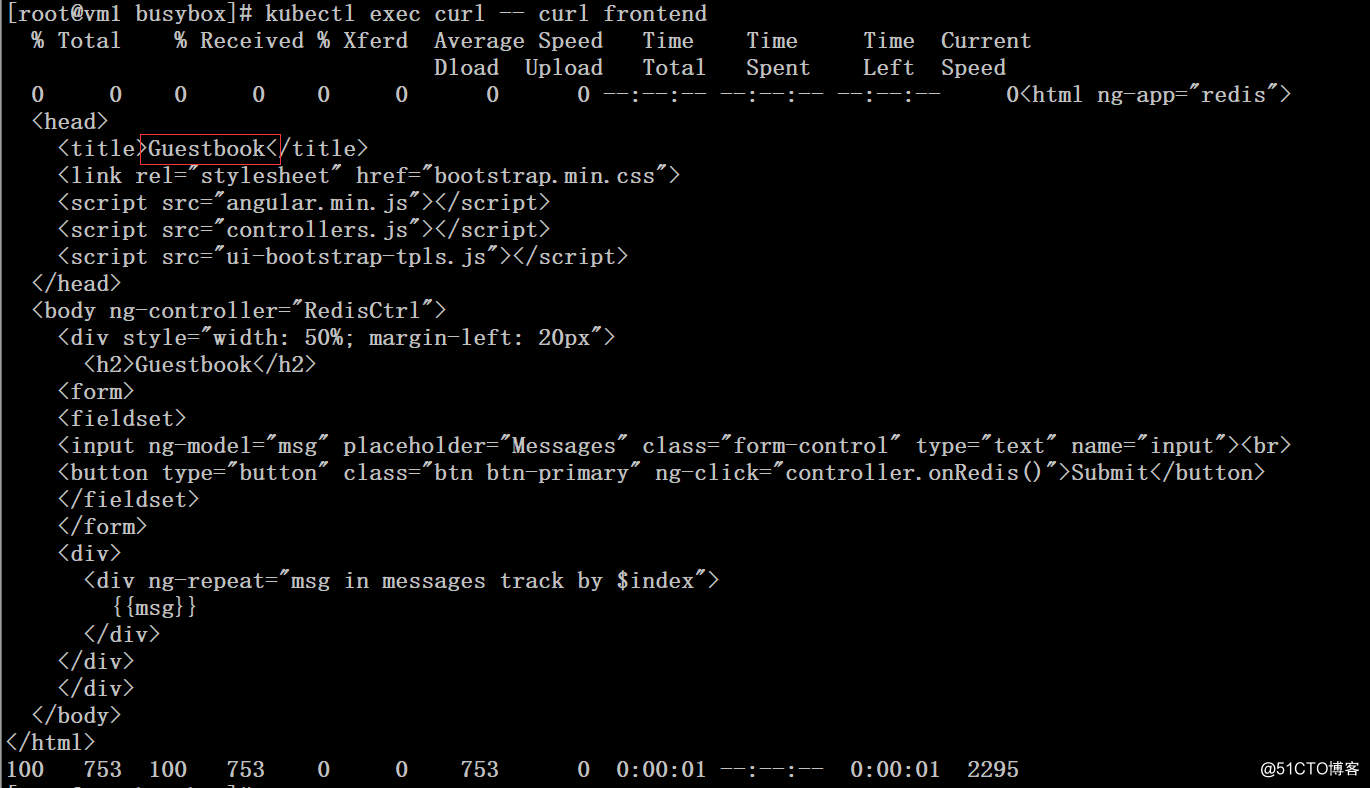
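Short names such as `frontend` resolve because the kubelet, once `--cluster-dns` and `--cluster-domain` are set, writes a matching nameserver and search list into each pod's `/etc/resolv.conf`. The sketch below prints what that file is expected to contain for the values used in this article. It is an assumption based on the usual kubelet behavior for pods with the default cluster DNS policy: the function name `pod_resolv_conf` is hypothetical, and the real file may order the lines differently or append the node's own search domains.

```shell
#!/bin/sh
# Print the resolv.conf a pod is expected to receive.
# Usage: pod_resolv_conf CLUSTER_DNS CLUSTER_DOMAIN [NAMESPACE]
# NAMESPACE defaults to "default" (assumed, as in this article).
pod_resolv_conf() {
    ns="${3:-default}"
    # The search list lets "frontend" expand to frontend.<ns>.svc.<domain>.
    printf 'search %s.svc.%s svc.%s %s\n' "$ns" "$2" "$2" "$2"
    printf 'nameserver %s\n' "$1"
    printf 'options ndots:5\n'
}

pod_resolv_conf 10.254.16.254 cluster.local
# To compare with the real file: kubectl exec busybox -- cat /etc/resolv.conf
```

If the real file shows a different nameserver, the kubelet on that node was probably not restarted after the configuration change.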

Access the PHP guestbook created earlier from the curl container.

# kubectl exec curl -- curl frontend
